
Basic Guide

This guide will show you how to use a pretrained model in an example Unity environment, and show you how to train the model yourself.

If you are not familiar with the Unity Engine, we highly recommend the Roll-a-ball tutorial to learn all the basic concepts of Unity.

Setting up the ML-Agents Toolkit within Unity

To use the ML-Agents toolkit within Unity, you first need to change some Unity settings. You also need the TensorFlowSharp plugin, which is based on the TensorFlowSharp repo, to use a pretrained model within Unity.

- Launch Unity

- On the Projects dialog, choose the Open option at the top of the window.

- Using the file dialog that opens, locate the MLAgentsSDK folder within the ML-Agents toolkit project and click Open.

- Go to Edit > Project Settings > Player

- For each of the platforms you target (PC, Mac and Linux Standalone, iOS or Android):

- Open the Other Settings section.

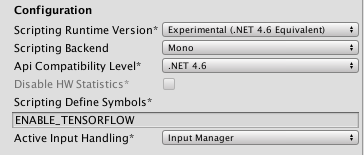

- Set Scripting Runtime Version to Experimental (.NET 4.6 Equivalent or .NET 4.x Equivalent)

- In Scripting Define Symbols, add the flag ENABLE_TENSORFLOW. After typing in the flag name, press Enter.

- Go to File > Save Project

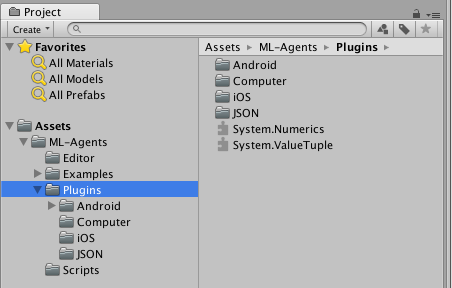

Download the TensorFlowSharp plugin, then import it into Unity by double-clicking the downloaded file. To confirm that the import succeeded, look for the TensorFlow files in the Project window under Assets > ML-Agents > Plugins > Computer.

Note: If you don't see anything under Assets, drag the MLAgentsSDK/Assets/ML-Agents folder onto Assets in the Project window.
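The import check can also be done from outside Unity. The sketch below is one way to do it in Python. It assumes that the Project window location Assets > ML-Agents > Plugins > Computer corresponds to the on-disk folder MLAgentsSDK/Assets/ML-Agents/Plugins/Computer. The helper name plugin_files is made up for this example and is not part of the toolkit.

```python
from pathlib import Path


def plugin_files(sdk_root):
    """List files found under Assets/ML-Agents/Plugins/Computer.

    sdk_root is the MLAgentsSDK folder (assumed on-disk layout).
    An empty list means the folder is missing or contains no files.
    """
    plugin_dir = Path(sdk_root) / "Assets" / "ML-Agents" / "Plugins" / "Computer"
    if not plugin_dir.is_dir():
        return []
    # Unity stores a .meta sidecar next to every asset; ignore those.
    return sorted(
        p.name
        for p in plugin_dir.rglob("*")
        if p.is_file() and p.suffix != ".meta"
    )


found = plugin_files("MLAgentsSDK")
print("plugin files:", found if found else "none found")
```

An empty result suggests the plugin was not imported, or that the ML-Agents folder still needs to be dragged into the project as described in the note above.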

Running a Pre-trained Model

- In the Project window, go to the Assets/ML-Agents/Examples/3DBall folder and open the 3DBall scene file.

- In the Hierarchy window, select the Ball3DBrain child under the Ball3DAcademy GameObject to view its properties in the Inspector window.

- On the Ball3DBrain object's Brain component, change the Brain Type to Internal.

- In the Project window, locate the Assets/ML-Agents/Examples/3DBall/TFModels folder.

- Drag the 3DBall model file from the TFModels folder to the Graph Model field of the Ball3DBrain object's Brain component.

- Click the Play button and you will see the platforms balance the balls using the pretrained model.

Using the Basics Jupyter Notebook

The notebooks/getting-started.ipynb Jupyter notebook contains a simple walkthrough of the functionality of the Python API. It can also serve as a simple test that your environment is configured correctly. Within Basics, be sure to set env_name to the name of the Unity executable if you want to use an executable or to None if you want to interact with the current scene in the Unity Editor.

More information and documentation is provided in the Python API page.
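As a small illustration of the env_name setting, the sketch below shows the two values the notebook accepts and what each one selects. The function describe_target and the executable name "3DBall" are hypothetical and exist only for this example; the notebook itself passes env_name on to the Python API.

```python
def describe_target(env_name):
    """Say which Unity target a given env_name value selects."""
    if env_name is None:
        # None: interact with the scene currently open in the Unity Editor.
        return "current scene in the Unity Editor"
    # Any other value is treated as the name of a built Unity executable.
    return f"Unity executable: {env_name}"


env_name = None  # or an executable name such as "3DBall" (hypothetical)
print(describe_target(env_name))
```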

Training the Brain with Reinforcement Learning

Setting the Brain to External

Since we are going to build this environment to conduct training, we need to set the brain used by the agents to External. This allows the agents to communicate with the external training process when making their decisions.

- In the Hierarchy window, click the triangle icon next to the Ball3DAcademy object.

- Select its child object Ball3DBrain.

- In the Inspector window, set Brain Type to External.

Training the environment

- Open a command or terminal window.

- Navigate to the folder where you installed the ML-Agents toolkit.

- Run mlagents-learn <trainer-config-path> --run-id=<run-identifier> --train where:

  - <trainer-config-path> is the relative or absolute filepath of the trainer configuration. The defaults used by environments in the ML-Agents SDK can be found in config/trainer_config.yaml.
  - <run-identifier> is a string used to separate the results of different training runs.
  - The --train flag tells mlagents-learn to run a training session (rather than inference).

- When the message "Start training by pressing the Play button in the Unity Editor" is displayed on the screen, you can press the ▶️ button in Unity to start training in the Editor.

Note: Alternatively, you can use an executable rather than the Editor to perform training. Please refer to this page for instructions on how to build and use an executable.

Note: If you're using Anaconda, don't forget to activate the ml-agents environment first.
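If you launch training from a script rather than by hand, the command in step 3 can be assembled as an argument list. The sketch below uses only the arguments described above. The function name build_learn_command and the run identifier "firstRun" are placeholders chosen for this example.

```python
import shlex


def build_learn_command(trainer_config_path, run_id, train=True):
    """Assemble the mlagents-learn invocation as an argument list."""
    if not run_id:
        raise ValueError("run_id must be a non-empty string")
    command = ["mlagents-learn", trainer_config_path, f"--run-id={run_id}"]
    if train:
        # --train requests a training session rather than inference.
        command.append("--train")
    return command


# "firstRun" is a hypothetical run identifier.
cmd = build_learn_command("config/trainer_config.yaml", "firstRun")
print(shlex.join(cmd))
# prints: mlagents-learn config/trainer_config.yaml --run-id=firstRun --train
```

The resulting list can be passed to subprocess.run(cmd, check=True) from the folder where the toolkit is installed. Keeping the arguments in a list avoids shell quoting problems when a path contains spaces.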

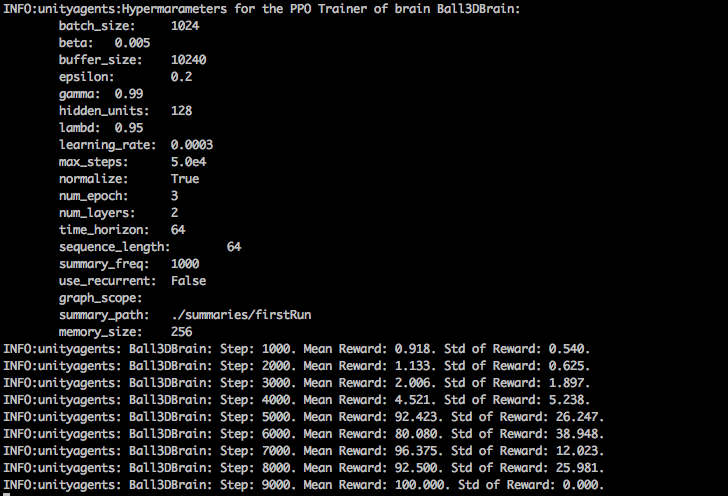

If mlagents-learn runs correctly and starts training, you should see something like this:

After training

You can press Ctrl+C to stop the training, and your trained model will be at models/<run-identifier>/editor_<academy_name>_<run-identifier>.bytes where <academy_name> is the name of the Academy GameObject in the current scene. This file corresponds to your model's latest checkpoint. You can now embed this trained model into your internal brain by following the steps below, which are similar to the steps described above.

- Move your model file into MLAgentsSDK/Assets/ML-Agents/Examples/3DBall/TFModels/.

- Open the Unity Editor, and select the 3DBall scene as described above.

- Select the Ball3DBrain object from the Scene hierarchy.

- Change the Type of Brain to Internal.

- Drag the <env_name>_<run-identifier>.bytes file from the Project window of the Editor to the Graph Model placeholder in the Ball3DBrain inspector window.

- Press the ▶️ button at the top of the Editor.
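The file location described in this section, and the move in the first step, can be sketched in Python as follows. This is a minimal example that assumes training was run from the Editor, so the file name carries the editor_ prefix. The function names and the "firstRun" identifier are illustrative and are not part of the toolkit.

```python
import shutil
from pathlib import Path


def trained_model_path(run_id, academy_name, models_dir="models"):
    """Path of the .bytes file written after training from the Editor."""
    return Path(models_dir) / run_id / f"editor_{academy_name}_{run_id}.bytes"


def install_model(model_file, tf_models_dir):
    """Move a trained model into the TFModels folder; return its new path."""
    model_file = Path(model_file)
    tf_models_dir = Path(tf_models_dir)
    if not model_file.is_file():
        raise FileNotFoundError(f"trained model not found: {model_file}")
    tf_models_dir.mkdir(parents=True, exist_ok=True)
    destination = tf_models_dir / model_file.name
    shutil.move(str(model_file), str(destination))
    return destination


# "firstRun" is a hypothetical run identifier.
source = trained_model_path("firstRun", "Ball3DAcademy")
print(source.as_posix())
# prints: models/firstRun/editor_Ball3DAcademy_firstRun.bytes
```

Calling install_model(source, "MLAgentsSDK/Assets/ML-Agents/Examples/3DBall/TFModels") then performs the move. The remaining steps (setting the Brain Type and dragging the file onto Graph Model) still have to be done in the Unity Editor.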

Next Steps

- For more information on the ML-Agents toolkit, in addition to helpful background, check out the ML-Agents Toolkit Overview page.

- For a more detailed walk-through of our 3D Balance Ball environment, check out the Getting Started page.

- For a "Hello World" introduction to creating your own learning environment, check out the Making a New Learning Environment page.

- For a series of YouTube video tutorials, check out the Machine Learning Agents PlayList page.