# Making a New Learning Environment

This tutorial walks through the process of creating a Unity Environment from
scratch. We recommend first reading the [Getting Started](Getting-Started.md)
guide, which presents the same concepts in an already-built environment.

In this example, we will create an agent capable of controlling a ball on a
platform. We will then train the agent to roll the ball toward the cube while
avoiding falling off the platform.

## Overview

1. Create an environment for your agents to live in. An environment can range
   from a simple physical simulation containing a few objects to an entire game
   or ecosystem.
1. Implement your Agent subclasses. An Agent subclass defines the code an Agent
   uses to observe its environment, to carry out assigned actions, and to
   calculate the rewards used for reinforcement training. You can also implement
   optional methods to reset the Agent when it has finished or failed its task.
1. Add your Agent subclasses to appropriate GameObjects, typically the object
   in the scene that represents the Agent in the simulation.

**Note:** If you are unfamiliar with Unity, refer to
[Learning the interface](https://docs.unity3d.com/Manual/LearningtheInterface.html)
in the Unity Manual.

The first task to accomplish is simply creating a new Unity project and

importing the ML-Agents assets into it:

1. Launch the Unity Editor and create a new project named "RollerBall".

2. Make sure that the Scripting Runtime Version for the project is set to use

**.NET 4.x Equivalent** (This is an experimental option in Unity 2017,

but is the default as of 2018.3.)

3. In a file system window, navigate to the folder containing your cloned

ML-Agents repository.

4. Open the `manifest.json` file in the `Packages` directory of your project.

Add the following line to your project's package dependencies:

```

"com.unity.ml-agents" : "file:< path_to_local_ml-agents_repo > /com.unity.ml-agents"

```

More information can be found in the [installation instructions ](Installation.md ) under

**Package Installation** .

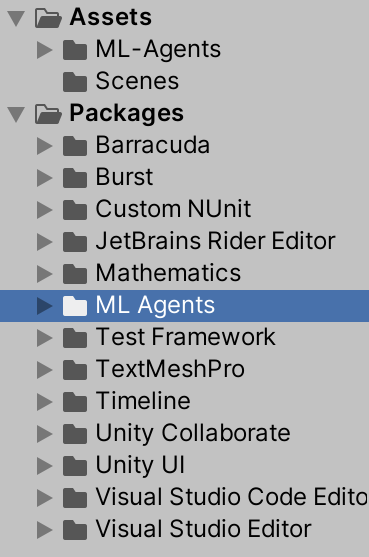

1. Launch Unity Hub and create a new 3D project named "RollerBall".

1. [Add the ML-Agents Unity package ](Installation.md#install-the-comunityml-agents-unity-package )

to your project.

<p align="left">
  <img src="images/roller-ball-projects.png"
       alt="Project window"
       width="250" border="10" />
</p>

Next, we will create a very simple scene to act as our learning environment. The
"physical" components of the environment include a Plane to act as the floor for
the Agent to move around on, a Cube to act as the goal or target for the agent
to seek, and a Sphere to represent the Agent itself.

1. Right-click in the Hierarchy window and select 3D Object > Plane.
1. Name the GameObject "Floor".
1. Select the Floor Plane to view its properties in the Inspector window.
1. Set Transform to Position = `(0, 0, 0)`, Rotation = `(0, 0, 0)`, Scale =
   `(1, 1, 1)`.
1. On the Plane's Mesh Renderer, expand the Materials property and change the
   default-material to *GridMatFloor* (or any suitable material of your choice).

(To set a new material, click the small circle icon next to the current material
name. This opens the **Object Picker** dialog so that you can choose a
different material from the list of all materials currently in the project.)

<p align="left">
  <img src="images/roller-ball-floor.png"
       alt="The Floor in the Inspector window"
       width="400" border="10" />
</p>

1. Right-click in the Hierarchy window and select 3D Object > Cube.
1. Name the GameObject "Target".
1. Select the Target Cube to view its properties in the Inspector window.
1. Set Transform to Position = `(3, 0.5, 3)`, Rotation = `(0, 0, 0)`, Scale =
   `(1, 1, 1)`.
1. On the Cube's Mesh Renderer, expand the Materials property and change the
   default-material to *AgentBlue*.

<p align="left">
  <img src="images/roller-ball-target.png"
       alt="The Target Cube in the Inspector window"
       width="400" border="10" />
</p>

1. Right-click in the Hierarchy window and select 3D Object > Sphere.
1. Name the GameObject "RollerAgent".
1. Select the RollerAgent Sphere to view its properties in the Inspector window.
1. Set Transform to Position = `(0, 0.5, 0)`, Rotation = `(0, 0, 0)`, Scale =
   `(1, 1, 1)`.
1. On the Sphere's Mesh Renderer, expand the Materials property and change the
   default-material to *Checkers_Ball*.
1. Click **Add Component**.
1. Add the `Rigidbody` component (under Physics) to the Sphere.

<p align="left">
  <img src="images/roller-ball-agent.png"
       alt="The Agent GameObject in the Inspector window"
       width="400" border="10" />
</p>

Note that the screenshot above includes the `Roller Agent` script, which we will
create as an Agent subclass in the next section. However, before we do that,
we'll first group the floor, target and agent under a single, empty GameObject.
This will simplify some of our subsequent steps.

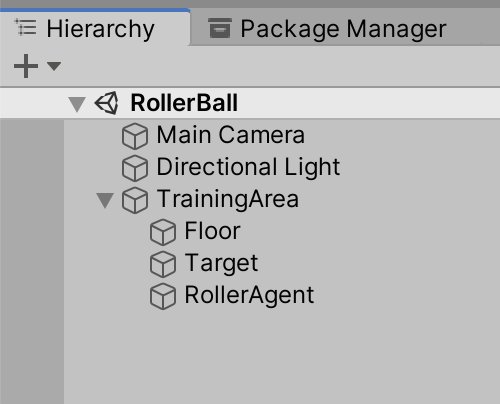

<p align="left">
  <img src="images/roller-ball-hierarchy.png"
       alt="The Hierarchy window"
       width="250" border="10" />
</p>

To do so:

1. Right-click on your Project Hierarchy and create a new empty GameObject. Name
   it TrainingArea.
1. Reset the TrainingArea’s Transform so that it is at `(0,0,0)` with Rotation
   `(0,0,0)` and Scale `(1,1,1)`.
1. Drag the Floor, Target, and RollerAgent GameObjects in the Hierarchy into the
   TrainingArea GameObject.

## Implement an Agent

1. Select the RollerAgent GameObject to view it in the Inspector window.
1. Click **Add Component**.
1. Click **New Script** in the list of components (at the bottom).
1. Name the script "RollerAgent".
1. Click **Create and Add**.
1. Open the new script in your code editor, add the `using MLAgents;` and
   `using MLAgents.Sensors;` statements, and then change the base class from
   `MonoBehaviour` to `Agent`.
1. Delete the `Update()` method, but leave the `Start()` method in place; we
   will use it later.

Next, we will add the logic that lets our Agent learn to roll to the cube using
reinforcement learning. More specifically, we will need to extend three methods
from the `Agent` base class:

- `OnEpisodeBegin()`
- `CollectObservations(VectorSensor sensor)`
- `OnActionReceived(float[] vectorAction)`

We overview each of these in more detail in the dedicated subsections below.
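As a rough orientation, the three overrides fit into the `RollerAgent` script
roughly as follows. This is only a skeleton built from the method signatures
listed above; the bodies are intentionally left empty and the comments merely
describe what each method is for.

```csharp
using UnityEngine;
using MLAgents;
using MLAgents.Sensors;

public class RollerAgent : Agent
{
    void Start()
    {
        // One-time setup, such as caching component references.
    }

    public override void OnEpisodeBegin()
    {
        // Called at the start of every episode: reset the scene here.
    }

    public override void CollectObservations(VectorSensor sensor)
    {
        // Add the values the Agent should observe to the sensor.
    }

    public override void OnActionReceived(float[] vectorAction)
    {
        // Apply the received actions and assign rewards.
    }
}
```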

The process of training in the ML-Agents Toolkit involves running episodes where
the Agent (Sphere) attempts to solve the task. Each episode lasts until the
Agent solves the task (i.e. reaches the cube), fails (rolls off the platform)
or times out (takes too long to solve or fail at the task). At the start of each
episode, the `OnEpisodeBegin()` method is called to set up the environment for a
new episode. Typically the scene is initialized in a random manner to enable the
agent to learn to solve the task under a variety of conditions.

In this example, each time the Agent (Sphere) reaches its target (Cube), its
episode ends and the `OnEpisodeBegin()` method moves the target (Cube) to a new
random location. In addition, if the Agent rolls off the platform, the
`OnEpisodeBegin()` method puts it back onto the floor.

To move the target (Cube), we need a reference to its Transform (which stores a
GameObject's position, orientation and scale in the 3D world). To get this
reference, add a public field of type `Transform` to the RollerAgent class.

To reset the Agent's velocity (and later to apply force to move the agent) we
need a reference to the Rigidbody component. A
[Rigidbody](https://docs.unity3d.com/ScriptReference/Rigidbody.html) is Unity's
primary element for physics simulation. (See
[Physics](https://docs.unity3d.com/Manual/PhysicsSection.html) for full
documentation of Unity physics.)
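One common way to obtain this reference, sketched below, is to call
`GetComponent<Rigidbody>()` once in `Start()` and cache the result in a field.
The field name `rBody` follows the name used in the snippets of this tutorial,
and the sketch assumes the Rigidbody sits on the same GameObject as the script,
as set up earlier.

```csharp
using UnityEngine;
using MLAgents;

public class RollerAgent : Agent
{
    // Cached reference to the Rigidbody on this GameObject
    Rigidbody rBody;

    void Start()
    {
        // Look the component up once instead of on every step
        rBody = GetComponent<Rigidbody>();
    }
}
```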

```csharp
public Transform Target;

public override void OnEpisodeBegin()
{
    if (this.transform.localPosition.y < 0)
    {
        // If the Agent fell, zero its momentum (rBody is the Rigidbody reference)
        this.rBody.angularVelocity = Vector3.zero;
        this.rBody.velocity = Vector3.zero;
        this.transform.localPosition = new Vector3(0, 0.5f, 0);
    }

    // Move the target to a new random spot on the floor
    Target.localPosition = new Vector3(Random.value * 8 - 4,
                                       0.5f,
                                       Random.value * 8 - 4);
}
```

Next, let's implement the `Agent.CollectObservations(VectorSensor sensor)`
method.

### Observing the Environment

A good rule of thumb for deciding what information to collect is to consider
what you would need to calculate an analytical solution to the problem.
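To make the rule of thumb concrete, here is a hypothetical hand-written
controller for this task. It is not part of ML-Agents and not what a trained
policy computes; it only illustrates that steering toward the cube without
overshooting needs the two positions and the current velocity. The class name,
method name and `damping` parameter are illustrative assumptions.

```csharp
using UnityEngine;

// Hypothetical helper, for illustration only.
public static class RollerSteeringSketch
{
    // Returns an (x, z) control signal that pushes toward the target and
    // subtracts a share of the current velocity to limit overshoot.
    public static Vector2 Steer(Vector3 agentPosition, Vector3 targetPosition,
                                Vector3 agentVelocity, float damping)
    {
        Vector3 toTarget = targetPosition - agentPosition;
        Vector3 desired = toTarget - damping * agentVelocity;

        // Only the horizontal plane matters; the ball is not steered vertically.
        Vector2 control = new Vector2(desired.x, desired.z);

        // Keep the signal inside the unit circle.
        return Vector2.ClampMagnitude(control, 1f);
    }
}
```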

In our case, the information our Agent collects includes the position of the
target, the position of the agent itself, and the velocity of the agent. The
velocity helps the Agent learn to control its speed so it doesn't overshoot the
target and roll off the platform. In total, the agent observation contains 8
values (3 for each position and 2 for the velocity), as implemented below:

```csharp
public override void CollectObservations(VectorSensor sensor)
{
    // Target and Agent positions
    sensor.AddObservation(Target.localPosition);
    sensor.AddObservation(this.transform.localPosition);

    // Agent velocity
    sensor.AddObservation(rBody.velocity.x);
    sensor.AddObservation(rBody.velocity.z);
}
```

### Taking Actions and Assigning Rewards

The final part of the Agent code is the `Agent.OnActionReceived()` method, which
receives actions and assigns the reward.

#### Actions

To solve the task of moving towards the target, the Agent (Sphere) needs to be
able to move in the `x` and `z` directions. As such, we will provide 2 actions
to the agent. The first determines the force applied along the x-axis; the
second determines the force applied along the z-axis. (If we allowed the Agent
to move in three dimensions, then we would need a third action.)

The RollerAgent applies the values from the `vectorAction` array to its
Rigidbody component, `rBody`, using the `Rigidbody.AddForce` function:

```csharp
// controlSignal is a Vector3 whose x and z hold vectorAction[0] and vectorAction[1]
rBody.AddForce(controlSignal * speed);
```

#### Rewards

Reinforcement learning requires rewards. Assign rewards in the
`OnActionReceived()` function. The learning algorithm uses the rewards assigned
to the Agent during the simulation and learning process to determine whether it
is giving the Agent the optimal actions. You want to reward an Agent for
completing the assigned task. In this case, the Agent is given a reward of 1.0
for reaching the Target cube.

The RollerAgent calculates its distance to the target to detect when it reaches
it. When it does, the code calls the `Agent.SetReward()` method to assign a
reward of 1.0 and marks the agent as finished by calling the `EndEpisode()`
method on the Agent.

```csharp
float distanceToTarget = Vector3.Distance(this.transform.localPosition, Target.localPosition);
// Reached target
if (distanceToTarget < 1.42f)
{
    SetReward(1.0f);
    EndEpisode();
}
```

Finally, if the Agent falls off the platform, end the episode so that it can
reset itself:

```csharp
if (this.transform.localPosition.y < 0)
{
    EndEpisode();
}
```

#### OnActionReceived()

With the action and reward logic outlined above, the final version of the
`OnActionReceived()` function looks like:

```csharp
// Force multiplier; public, so it can be adjusted in the Inspector
public float speed = 10;

public override void OnActionReceived(float[] vectorAction)
{
    // Actions, size = 2
    Vector3 controlSignal = Vector3.zero;
    controlSignal.x = vectorAction[0];
    controlSignal.z = vectorAction[1];
    rBody.AddForce(controlSignal * speed);

    // Rewards
    float distanceToTarget = Vector3.Distance(this.transform.localPosition, Target.localPosition);

    // Reached target
    if (distanceToTarget < 1.42f)
    {
        SetReward(1.0f);
        EndEpisode();
    }

    // Fell off platform
    if (this.transform.localPosition.y < 0)
    {
        EndEpisode();
    }
}
```

Note that the `speed` class variable is defined before the function. Since
`speed` is public, you can set the value from the Inspector window.

Now that all the GameObjects and ML-Agents components are in place, it is time
to connect everything together in the Unity Editor. This involves changing some
of the Agent Component's properties so that they are compatible with our Agent
code.

1. Select the **RollerAgent** GameObject to show its properties in the Inspector
   window.
1. Add the `Decision Requester` script with the Add Component button from the
   RollerAgent Inspector.
1. Change **Decision Period** to `10`.
1. Drag the Target GameObject from the Hierarchy window to the RollerAgent
   Target field.
1. Add the `Behavior Parameters` script with the Add Component button from the
   RollerAgent Inspector.
1. Modify the Behavior Parameters of the Agent:
   - `Behavior Name` to _RollerBall_
   - `Vector Observation` > `Space Size` = 8
   - `Vector Action` > `Space Type` = **Continuous**
   - `Vector Action` > `Space Size` = 2

It is always a good idea to first test your environment by controlling the Agent
using the keyboard. To do so, you will need to extend the `Heuristic()` method
in the `RollerAgent` class. For our example, the heuristic will generate an
action corresponding to the values of the "Horizontal" and "Vertical" input axes
(which correspond to the keyboard arrow keys):

```csharp
public override void Heuristic(float[] actionsOut)
{
    actionsOut[0] = Input.GetAxis("Horizontal");
    actionsOut[1] = Input.GetAxis("Vertical");
}
```

In order for the Agent to use the Heuristic, you will need to set the
`Behavior Type` to `Heuristic Only` in the `Behavior Parameters` of the
RollerAgent.

Press **Play** (:arrow_forward:) to run the scene and use the arrow keys to move
the Agent around the platform. Make sure that there are no errors displayed in
the Unity Editor Console window and that the Agent resets when it reaches its
target or falls from the platform. Note that for more involved debugging, the
ML-Agents SDK includes a convenient [Monitor](Feature-Monitor.md) class that you
can use to easily display Agent status information in the Game window.

The process is the same as described in the
[Getting Started Guide](Getting-Started.md); see also
[Training ML-Agents](Training-ML-Agents.md). Note that the models will be
created in the original ml-agents project folder, `ml-agents/models`.

The hyperparameters for training are specified in a configuration file that you
pass to the `mlagents-learn` program. With the default settings in the original
`ml-agents/config/trainer_config.yaml` file, the RollerAgent takes about 300,000
steps to train, but a few changes speed up training considerably (to under
20,000 steps). Create a new `rollerball_config.yaml` file and include the
following hyperparameter values:

```yml
RollerBall:
  trainer: ppo
  batch_size: 10
  beta: 5.0e-3
  buffer_size: 100
  epsilon: 0.2
  hidden_units: 128
  lambd: 0.95
  learning_rate: 3.0e-4
  learning_rate_schedule: linear
  max_steps: 5.0e4
  normalize: false
  num_epoch: 3
  num_layers: 2
  time_horizon: 64
  summary_freq: 10000
  use_recurrent: false
  reward_signals:
    extrinsic:
      strength: 1.0
      gamma: 0.99
```

Since this example creates a very simple training environment with only a few
inputs and outputs, using small batch and buffer sizes speeds up the training
considerably. However, if you add more complexity to the environment or change
the reward or observation functions, you might also find that training performs
better with different hyperparameter values. In addition to setting these
hyperparameter values, the **Decision Period** parameter of the Agent's
`Decision Requester` component has a large effect on training time and success.
A larger value reduces the number of decisions the training algorithm has to
consider and, in this simple environment, speeds up training.

To train your agent, run the following command before pressing :arrow_forward:
in the Editor:

```sh
mlagents-learn config/rollerball_config.yaml --run-id=RollerBall
```

**Note:** If you get a `command not found` error when running this command, make
sure that you have followed the *Install Python and mlagents Package* section of
the ML-Agents [Installation](Installation.md) instructions.

To monitor the statistics of Agent performance during training, use
[TensorBoard](Using-Tensorboard.md). In particular, the _cumulative_reward_ and
_value_estimate_ statistics show how well the Agent is achieving the task. In
this example, the maximum reward an Agent can earn is 1.0, so these statistics
approach that value when the Agent has successfully _solved_ the problem.

**Note:** If you use TensorBoard, always increment or change the `run-id` you
pass to the `mlagents-learn` command for each training run. If you use the same
id value, the statistics for multiple runs are combined and become difficult to
interpret.

In many of the [example environments](Learning-Environment-Examples.md), many
copies of the training area are instantiated in the scene. This generally speeds
up training, allowing the environment to gather many experiences in parallel.
This can be achieved simply by instantiating many Agents with the same
`Behavior Name`. Note that we've already simplified our transition to using
multiple areas by creating the `TrainingArea` GameObject and relying on local
positions in `RollerAgent.cs`. Use the following steps to parallelize your
RollerBall environment:

### Instantiating Multiple Training Areas

1. Drag the TrainingArea GameObject, along with its attached GameObjects, into
   your Assets browser, turning it into a prefab.
1. You can now instantiate copies of the TrainingArea prefab. Drag them into
   your scene, positioning them so that they do not overlap.

Because `RollerAgent.cs` uses `localPosition` rather than `position`, checks
such as `this.transform.localPosition.y < 0` are made relative to the
TrainingArea's location and not in global coordinates, so they keep working for
areas placed anywhere in the scene. This is only one way to achieve this
objective. Refer to the [example environments](Learning-Environment-Examples.md)
for other ways to achieve relative positioning.
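As an alternative to dragging copies into the scene by hand, the same layout
could be produced from a script. The sketch below is one possible approach, not
a method prescribed by ML-Agents: `TrainingAreaSpawner`, `trainingAreaPrefab`,
`areasPerRow`, `rows` and `spacing` are hypothetical names, and the default
spacing of 15 units is an assumption chosen to exceed the 10 x 10 footprint of
Unity's default Plane so that areas do not overlap.

```csharp
using UnityEngine;

// Hypothetical helper: attach to an empty GameObject in the scene.
public class TrainingAreaSpawner : MonoBehaviour
{
    // Assign the TrainingArea prefab in the Inspector
    public GameObject trainingAreaPrefab;
    public int areasPerRow = 3;
    public int rows = 3;
    // Distance between area centers; assumed larger than one floor
    public float spacing = 15f;

    void Awake()
    {
        // Lay the copies out on a grid in the x-z plane
        for (int row = 0; row < rows; row++)
        {
            for (int col = 0; col < areasPerRow; col++)
            {
                Vector3 position = new Vector3(col * spacing, 0f, row * spacing);
                Instantiate(trainingAreaPrefab, position, Quaternion.identity);
            }
        }
    }
}
```

To try it, leave the hand-placed TrainingArea out of the scene (or count it as
one of the copies) so that it does not overlap the area spawned at the origin.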