
This repo is intended to provide more advanced demos for AR Foundation outside of the Samples Repo.

For questions and issues related to AR Foundation, please post on the AR Foundation Sample issues and NOT in this repo. You can also post on the AR Foundation Forums.

arfoundation-demos

AR Foundation demo projects.

Demo projects that use AR Foundation 3.0 and demonstrate more advanced functionality around certain features.

This set of demos relies on five Unity packages:

- ARSubsystems (documentation)

- ARCore XR Plugin (documentation)

- ARKit XR Plugin (documentation)

- ARKit Face Tracking (documentation)

- ARFoundation (documentation)

ARSubsystems defines an interface, and the platform-specific implementations are in the ARCore and ARKit packages. ARFoundation turns the AR data provided by ARSubsystems into Unity GameObjects and MonoBehaviours.

The master branch is compatible with Unity 2019.3.

Image Tracking — Also available on the asset store here

A sample app showing off how to use Image Tracking to track multiple unique images and spawn unique prefabs for each image.

The script ImageTrackingObjectManager.cs handles storing prefabs and updating them based on found images. It links into the ARTrackedImageManager.trackedImagesChanged callback to spawn a prefab for each tracked image, update its position, show a visual on the prefab depending on its tracked state, and destroy it if the image is removed.
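As a rough illustration of that callback flow, here is a minimal sketch. It is not the repo's ImageTrackingObjectManager.cs: the class name, the serialized fields and the assumption that the reference images are named "one" and "two" in the reference image library are all hypothetical, and toggling the whole prefab on tracking state is a simplification of the visual the demo shows.

```csharp
using System.Collections.Generic;
using UnityEngine;
using UnityEngine.XR.ARFoundation;
using UnityEngine.XR.ARSubsystems;

// Hypothetical sketch: spawn, update and destroy one prefab per tracked image.
public class TrackedImagePrefabSketch : MonoBehaviour
{
    [SerializeField] ARTrackedImageManager m_ImageManager;
    [SerializeField] GameObject m_OnePrefab; // assumed prefab for the image named "one"
    [SerializeField] GameObject m_TwoPrefab; // assumed prefab for the image named "two"

    // Spawned instances keyed by the tracked image they belong to.
    readonly Dictionary<TrackableId, GameObject> m_Spawned =
        new Dictionary<TrackableId, GameObject>();

    void OnEnable()  { m_ImageManager.trackedImagesChanged += OnTrackedImagesChanged; }
    void OnDisable() { m_ImageManager.trackedImagesChanged -= OnTrackedImagesChanged; }

    void OnTrackedImagesChanged(ARTrackedImagesChangedEventArgs args)
    {
        foreach (ARTrackedImage image in args.added)
        {
            // Assumption: reference image names match "one" / "two".
            GameObject prefab = image.referenceImage.name == "one" ? m_OnePrefab : m_TwoPrefab;
            m_Spawned[image.trackableId] =
                Instantiate(prefab, image.transform.position, image.transform.rotation);
        }

        foreach (ARTrackedImage image in args.updated)
        {
            if (!m_Spawned.TryGetValue(image.trackableId, out GameObject spawned))
                continue;

            // Follow the image and hide the instance while it is not fully tracked.
            spawned.transform.SetPositionAndRotation(image.transform.position, image.transform.rotation);
            spawned.SetActive(image.trackingState == TrackingState.Tracking);
        }

        foreach (ARTrackedImage image in args.removed)
        {
            if (m_Spawned.TryGetValue(image.trackableId, out GameObject spawned))
            {
                Destroy(spawned);
                m_Spawned.Remove(image.trackableId);
            }
        }
    }
}
```

Keying the dictionary by TrackableId rather than by image name keeps the sketch valid if the same reference image is ever reported as more than one trackable.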

The project contains two unique images, one.png and two.png, which can be printed out or displayed on digital devices. The images are 2048x2048 pixels with a real world size of 0.2159 x 0.2159 meters.

The Prefabs for each number are prefab variants derived from OnePrefab.prefab. They use a small quad that uses the MobileARShadow.shader in order to accurately show a shadow of the 3D number.

The script DistanceManager.cs checks the distances between the tracked images and displays an additional 3D model between them when they reach a certain proximity.
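The proximity check can be pictured with a small sketch like the one below. It is not the repo's DistanceManager.cs: the class name, the field names and the 0.3 meter threshold are hypothetical, and placing the extra model at the midpoint is an assumption about what "between them" means.

```csharp
using UnityEngine;

// Hypothetical sketch: show an extra model when two tracked objects are close.
// Attach this to a GameObject other than m_MiddleModel so Update keeps running.
public class ProximitySketch : MonoBehaviour
{
    [SerializeField] Transform m_First;           // e.g. the spawned "one" prefab
    [SerializeField] Transform m_Second;          // e.g. the spawned "two" prefab
    [SerializeField] GameObject m_MiddleModel;    // model shown between the two
    [SerializeField] float m_ThresholdMeters = 0.3f; // assumed proximity threshold

    void Update()
    {
        if (m_First == null || m_Second == null || m_MiddleModel == null)
            return;

        float distance = Vector3.Distance(m_First.position, m_Second.position);
        bool isClose = distance <= m_ThresholdMeters;

        m_MiddleModel.SetActive(isClose);
        if (isClose)
        {
            // Keep the model at the midpoint of the two tracked objects.
            m_MiddleModel.transform.position =
                Vector3.Lerp(m_First.position, m_Second.position, 0.5f);
        }
    }
}
```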

The script NumberManager.cs handles setting up a constraint (in this case used to billboard the model) on the 3D number objects and provides a function to enable and disable the rendering of the 3D model.

Missing Prefab in ImageTracking scene.

If you import the image tracking package or download it from the asset store without the Onboarding UX, there will be a Missing Prefab in your scene. This prefab is a configured ScreenSpaceUI prefab from the Onboarding UX, set up with the Find an Image instructional UI and the Found an Image goal.

UX — Also available on the asset store here

A UI / UX framework for providing guidance to the user for a variety of different types of mobile AR apps.

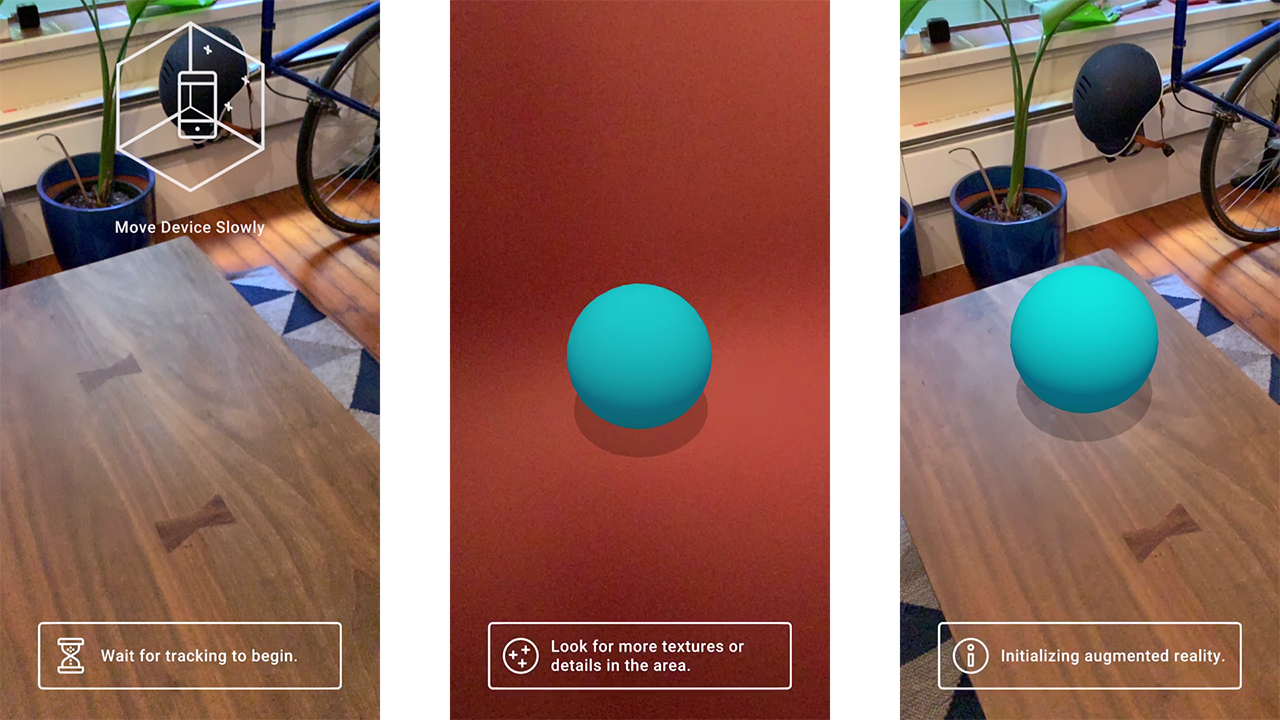

The framework adopts the idea of showing instructional UI with an instructional goal in mind. One common use is UI that instructs the user to move their device around, with the goal of finding a plane. Once the goal is reached the UI fades out. There is also a secondary instructional UI and an API that allows developers to add any number of additional UI and goals, which go into a queue and are processed one at a time.

A common two step UI / Goal is to instruct the user to find a plane. Once a plane is found you can instruct the user to tap in order to place an object. Once an object is placed fade out the UI.

The instructional UI consists of the following animations / videos:

- Cross Platform Find a plane

- Find a Face

- Find a Body

- Find an Image

- Find an Object

- ARKit Coaching Overlay

- Tap to Place

- None

All of the instructional UI (except the ARKit coaching overlay) is an included .webm video encoded with the VP8 codec in order to support transparency.

The following goals fade out the UI:

- Found a plane

- Found Multiple Planes

- Found a Face

- Found a Body

- Found an Image

- Found an Object

- Placed an Object

- None

Each goal checks the trackables count of the associated ARTrackableManager. Note that this only looks for a trackable to have been added; it does not check the tracking state of that trackable.
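To make the distinction concrete, the sketch below contrasts a count-based check with a stricter check on tracking state, using the plane manager as the example. The class and method names are hypothetical and this is not code from UIManager.cs; only the count-based variant reflects what the goals are described as doing.

```csharp
using UnityEngine;
using UnityEngine.XR.ARFoundation;
using UnityEngine.XR.ARSubsystems;

// Hypothetical sketch: two ways to decide that a "found a plane" goal is met.
public class PlaneGoalSketch : MonoBehaviour
{
    [SerializeField] ARPlaneManager m_PlaneManager;

    // Count-based check: true as soon as any plane trackable has been added.
    public bool AnyPlaneAdded()
    {
        return m_PlaneManager != null && m_PlaneManager.trackables.count > 0;
    }

    // Stricter variant: also requires at least one plane to be fully tracked.
    public bool AnyPlaneTracking()
    {
        if (m_PlaneManager == null)
            return false;

        foreach (ARPlane plane in m_PlaneManager.trackables)
        {
            if (plane.trackingState == TrackingState.Tracking)
                return true;
        }
        return false;
    }
}
```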

The script UIManager.cs is used to configure the Instructional Goals, secondary instructional goals and holds references to the different trackable managers.

UIManager manages a queue of UXHandle objects, which allows any instructional UI with any goal to be added dynamically at runtime. To do this, store a reference to the UIManager and call AddToQueue(), passing in a UXHandle object. For testing purposes, to visualize every UI video, I use the following setup:

```csharp
m_UIManager = GetComponent<UIManager>();
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.CrossPlatformFindAPlane, UIManager.InstructionGoals.PlacedAnObject));
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.FindABody, UIManager.InstructionGoals.PlacedAnObject));
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.FindAFace, UIManager.InstructionGoals.PlacedAnObject));
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.FindAnImage, UIManager.InstructionGoals.PlacedAnObject));
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.FindAnObject, UIManager.InstructionGoals.PlacedAnObject));
m_UIManager.AddToQueue(new UXHandle(UIManager.InstructionUI.ARKitCoachingOverlay, UIManager.InstructionGoals.PlacedAnObject));
```

There's an m_CoachingOverlayFallback used to enable the ARKit coaching overlay on supported devices but fall back to Cross Platform Find a Plane when it is not supported.

The script ARUXAnimationManager.cs holds references to all the videos, controls all the logic for fading the UI in and out, managing the video swapping and swapping the associated text with each video / UI.

The script DisableTrackedVisuals holds a reference to the ARPlaneManager and ARPointCloudManager to allow for disabling both the spawned objects from the managers and the managers themselves, preventing further plane tracking or feature point (point cloud) tracking.
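A minimal sketch of that idea follows. It is not the repo's DisableTrackedVisuals script: the class and method names are hypothetical, and it assumes that hiding the existing trackable GameObjects and disabling the manager components is enough for your scene.

```csharp
using UnityEngine;
using UnityEngine.XR.ARFoundation;

// Hypothetical sketch: hide existing planes / point clouds and stop tracking new ones.
public class DisableVisualsSketch : MonoBehaviour
{
    [SerializeField] ARPlaneManager m_PlaneManager;
    [SerializeField] ARPointCloudManager m_PointCloudManager;

    public void DisablePlanes()
    {
        // Hide every plane the manager has already spawned.
        foreach (ARPlane plane in m_PlaneManager.trackables)
            plane.gameObject.SetActive(false);

        // Disabling the manager stops further plane detection.
        m_PlaneManager.enabled = false;
    }

    public void DisablePointClouds()
    {
        // Hide every point cloud the manager has already spawned.
        foreach (ARPointCloud cloud in m_PointCloudManager.trackables)
            cloud.gameObject.SetActive(false);

        // Disabling the manager stops further feature point tracking.
        m_PointCloudManager.enabled = false;
    }
}
```

These methods could be called once an object has been placed, for example, so the scene is no longer cluttered with plane and point cloud visuals.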

Tracking Reasons

When the session (device) is not tracking or has lost tracking there are a variety of different reasons why. It can be helpful to show these reasons to users so they better understand the experience or what may be hindering it.

Both ARKit and ARCore have slightly different reasons but in AR Foundation these are surfaced through the same shared API.

The script ARUXReasonsManager.cs handles the visualization of the states and subscribes to the state change on the ARSession. The reasons are set, and the display text and icon are changed, in the SetReaons() method. Here I treat Initializing and Relocalizing the same, and for English both display "Initializing augmented reality".
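The sketch below shows one way such a subscription and reason mapping could look. It is not the code in ARUXReasonsManager.cs: the class name and the Describe helper are hypothetical, and every display string other than "Initializing augmented reality" is a placeholder rather than the demo's actual text.

```csharp
using UnityEngine;
using UnityEngine.XR.ARFoundation;
using UnityEngine.XR.ARSubsystems;

// Hypothetical sketch: log a user-facing message whenever the session is not tracking.
public class NotTrackingReasonSketch : MonoBehaviour
{
    void OnEnable()  { ARSession.stateChanged += OnStateChanged; }
    void OnDisable() { ARSession.stateChanged -= OnStateChanged; }

    void OnStateChanged(ARSessionStateChangedEventArgs args)
    {
        // Nothing to report while the session is tracking normally.
        if (args.state == ARSessionState.SessionTracking)
            return;

        Debug.Log(Describe(ARSession.notTrackingReason));
    }

    // Maps the shared AR Foundation reason to display text.
    static string Describe(NotTrackingReason reason)
    {
        switch (reason)
        {
            // Initializing and Relocalizing are treated the same.
            case NotTrackingReason.Initializing:
            case NotTrackingReason.Relocalizing:
                return "Initializing augmented reality";
            case NotTrackingReason.InsufficientLight:
                return "Too dark: try a brighter area";    // placeholder text
            case NotTrackingReason.InsufficientFeatures:
                return "Point at a more detailed surface"; // placeholder text
            case NotTrackingReason.ExcessiveMotion:
                return "Move the device more slowly";      // placeholder text
            default:
                return string.Empty;
        }
    }
}
```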

Localization

If you want to use localization make sure to read the required addressables building documentation at the end of this section.

The Instructional UI and the Reasons have localization support through the Unity localization package. It's enabled for the instructional UI in AR UX Animation Manager with the m_LocalizeText bool and for the reasons in the AR UX Reasons Manager with the m_LocalizeText bool.

Localization currently supports the following languages:

- English

- French

- German

- Italian

- Spanish

- Portuguese

- Russian

- Simplified Chinese

- Korean

- Japanese

- Hindi

Tamil and Telugu translations are available but, due to font rendering complexities, are not currently enabled.

The localizations are supported through a CSV that is imported into the project and parsed into the proper localization table via StringImporter.cs.

If you would like to help out, have a suggestion for a better translation, or want to add additional languages, please reach out and comment on this publicly available Sheet.

In the scene, localization is driven by the script LocalizationManager.cs, which has a SupportedLanguages enum for each supported language. The current implementation only supports selecting and setting a language at compile time and NOT at runtime. This is because the selected language from the enum is set in the Start() method of LocalizationManager.cs.

After the language is set the localized fields are retrieved from the tables based on specific keys for each value and then referenced in the AR UX Animation Manager and AR UX Reasons Manager.

Many languages require unique fonts in order to render their characters properly. For these languages the fonts are swapped at runtime, along with language-specific settings, in SwapFonts().

Packing Asset bundles for building localization support

The Localization package uses Addressables to organize and pack the translated strings. There are some additional steps required to properly build these for your application. If you're localizing the text for the instructions or the reasons you will need to do these steps.

- Open the Addressables Groups window (Window / Asset Management / Addressables / Groups)

- In the Addressables Groups Window click on the Build Tab / New Build / Default Build Script

- You will need to do this for every platform you are building for. (Once for Android and once for iOS).